Peter

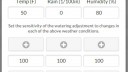

I have been using the Zimmerman method for a while now, but its baseline weather conditions (Temp = 70F, Humidity = 30%, Rain = 0) aren’t a great match for London, UK, where the average temp is 50F and humidity is up around 80%. As a workaround I have been using a 0% sensitivity setting on temp/humidity, so the adjustment pretty much ignores any weather fluctuations except for rainfall.

But over the weekend I modified the UI and the weather server script to accept a custom baseline for my location, which in essence lets me set the reference temp/humidity/rain to average London conditions. This means that I can set my program duration for an “average day” scenario and have Zimmerman adjust for daily offsets in all three variables.
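To make the idea concrete, here is a rough Python sketch of a Zimmerman-style calculation with the baseline pulled out into parameters. This is not my actual patch: the function name, the parameter names, the weighting constants (4 per degree F, 200 per inch of rain) and the 0 to 200% clamp are assumptions for illustration, based on my understanding of how the stock adjustment behaves.

```python
def zimmerman_scale(temp_f, humidity_pct, rain_in,
                    base_temp_f=70.0, base_humidity_pct=30.0, base_rain_in=0.0,
                    temp_sens=100.0, humidity_sens=100.0, rain_sens=100.0):
    """Return a watering percentage (0 to 200) for one day's weather.

    Hypothetical helper: the baseline defaults mirror the stock
    70F / 30% / 0 in reference; the weights and clamp are assumed.
    """
    # Each factor is the offset from the baseline, in percentage points.
    humidity_factor = base_humidity_pct - humidity_pct   # more humid -> less water
    temp_factor = (temp_f - base_temp_f) * 4.0           # hotter -> more water
    rain_factor = (base_rain_in - rain_in) * 200.0       # more rain -> less water

    scale = (100.0
             + humidity_factor * humidity_sens / 100.0
             + temp_factor * temp_sens / 100.0
             + rain_factor * rain_sens / 100.0)

    # Clamp to the assumed 0..200% range.
    return max(0.0, min(200.0, scale))


# An average London day (50F, 80% humidity, no rain):
print(zimmerman_scale(50, 80, 0))                          # stock baseline -> 0.0
print(zimmerman_scale(50, 80, 0, base_temp_f=50,
                      base_humidity_pct=80))               # London baseline -> 100.0
```

With the stock baseline an ordinary London day gets scaled down to no watering at all, which is why I had the temp/humidity sensitivity turned off. With a local baseline the same day comes out at 100%, and only the day-to-day offsets move the result.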

It will be interesting to see how it works out in practice, but backtesting on a year’s worth of historical data suggests a much better fit. The code changes are pretty minimal and backwards compatible. Is this of interest to others?